Michael Behe has a new book called Darwin Devolves, published by HarperOne. Nathan Lents, Joshua Swamidass, and I wrote a review of that book for the journal Science. (You can find an open-access version of our review here.) As our review says (in agreement with Behe), there are many examples of evolution in which genes and their functions have been degraded, sometimes yielding an advantage to the organism. Unfortunately, though, Behe largely ignores the ways that evolution generates new functions and thereby produces complexity. That’s a severe problem because Behe uses the evidence for the ease of gene degradation to support his overarching implication that our current understanding of the mechanisms of evolution is inadequate and, consequently, the field of evolutionary biology has a “big problem” and is therefore in scientific trouble.

I hope to accomplish several things in a series of posts. (I initially planned to write three posts, but it will now be more than that, as I delve deeper into several issues.) In my first post, I explained why Behe’s so-called “first rule of adaptive evolution” does not imply what he says it does about evolution writ large. In summarizing, I wrote that Behe is right that mutations that break or blunt a gene can be adaptive. And he’s right that, when such mutations are adaptive, they are easy to come by. But Behe is wrong when he implies these facts present a problem, because his thesis confuses frequencies over the short run with lasting impacts over the long haul of evolution.

In this post, I take a closer look at Behe’s “rule” and how one might decide whether or not a particular mutation is damaging to a particular gene in a particular context. I’ll then describe and discuss the example that Behe chose to illustrate his argument at the outset of his book, calling attention to the fact that his inferences were indirect, and as a result a key conclusion was quite possibly wrong. [These issues came to my attention based on work by Nathan Lents, Art Hunt and Joshua Swamidass. They voiced concerns about this example on their own blogs, here and here. I’ve now done my own reading, and in this post I attempt to provide just a tiny bit of important technical background before addressing the main concern, as I see it.]

II-A. How does one know if a mutation has damaged a gene?

Behe’s first rule of adaptive evolution says this: “Break or blunt any functional gene whose loss would increase the number of a species’ offspring.” Every biologist knows that many mutations break or reduce the functionality of genes and the products they encode. Every biologist also realizes that this can sometimes increase an organism’s fitness (i.e., its survival and reproductive success), in particular when two conditions are met. First, the function has to be one that is not—or rather, no longer—useful to the organism. For example, eyes are no longer useful to an organism whose ancestors lived above ground, but which itself now lives in perpetual darkness in a cave. Second, there must be a meaningful cost to the organism (again, in the currency of fitness) of having the functional form of the gene, and that cost must be reduced or eliminated for the mutated version of the gene. This second point means that mutations that break or blunt a particular gene—even one that is useless—are not necessarily advantageous; they might instead be selectively neutral, such as when an encoded protein is still expressed but, for example, has diminished activity on a substrate that isn’t even present. Therefore, compelling evidence for a broken or blunted gene in a particular lineage suggests that the gene’s function is under what evolutionary biologists call “relaxed” selection—relaxed because some capability that was useful during the history of a lineage is no longer important under the organisms’ present circumstances. However, that does not mean that the loss or diminution of the capability necessarily provided any advantage; instead, the gene could have decayed by the random fixation of mutations that were entirely inconsequential for fitness.

Two very important issues center on (i) how an observer can tell whether a particular mutation breaks or blunts a gene; and (ii) how that observer can determine whether the resulting mutation is advantageous. In short, neither inference is ironclad without an in-depth case-by-case investigation, although there are shortcuts that biologists often take because they make sense and are often sound, provided one takes care to understand the potential limitations of the inference. To characterize the biochemical consequences of a mutation, for example, the gold standard would be to perform detailed analyses of the activities of proteins encoded by different forms (alleles) of the same gene. That’s difficult, technical work.

But as I said, there are shortcuts that allow scientists to draw reasonable inferences in some cases. For example, a mutation that generates a premature stop codon (a so-called “nonsense” mutation) usually eliminates the encoded protein’s function. However, there are exceptions, such as when the premature stop is very near the end of the gene. It’s also possible that a truncated protein might even have some new activity and function, or that it might accumulate additional mutations that produce a new activity. That’s unlikely in any one case, but a lot of unlikely things can happen over the vast scales of space and time over which evolution has operated. As the Nobel laureate François Jacob famously wrote years ago, “natural selection does not work as an engineer works. It works like a tinkerer—a tinkerer who does not know exactly what he is going to produce but uses whatever he finds around him whether it be pieces of string, fragments of wood, or old cardboards; in short, it works like a tinkerer who uses everything at his disposal to produce some kind of workable object.”

At the other end of the spectrum with respect to inferred functionality, some mutations change the DNA sequence of a gene, but they have no effect on the resulting amino-acid sequence of a protein. That happens because the genetic code is redundant, with multiple codons for the same amino acid. Such mutations are called “synonymous” and they are generally presumed to be neutral precisely because they don’t change a protein. Once again, however, there are some exceptions to this usually reliable inference; a synonymous mutation could affect, for example, the rate at which the protein is produced and even its propensity to fold into a specific conformation.

In the middle ground between these (usually) clear-cut extremes are the cases where a mutation produces an amino-acid substitution in the encoded protein. Does that mutation change the protein’s activity? If it does, is it necessarily damaging to the protein and/or to the organism with that altered protein? Biochemical and structural studies of proteins have shed light on this issue by identifying so-called “active sites” of many proteins—positions in the structure of a protein molecule where it interacts with a substrate and facilitates a chemical reaction. Mutations in and around active sites are more likely to affect a protein’s activity than ones that are far away. Also, even at the same site in a protein, different mutations are likely to have more or less pronounced effects on the protein’s activity, depending on whether the substitution changes the charge and/or size of the amino acid at that site.
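The three categories described above (nonsense, synonymous, and amino-acid-changing, or “missense”) can be read directly off the standard genetic code. Here is a minimal Python sketch of that shortcut. The function name `classify_point_mutation` is my own invention for illustration, and the label it returns says only what kind of change occurred in the sequence. It says nothing about whether the protein works better, worse, or the same, which is exactly the caveat raised above.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code with codons ordered TTT, TTC, TTA, TTG, TCT, ...
# The "*" character marks a stop codon.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def classify_point_mutation(codon, position, new_base):
    """Label a single-base change within one codon (position is 0, 1, or 2).

    Hypothetical helper for illustration: it reports the sequence-level
    category only, not the functional consequence of the change.
    """
    codon = codon.upper()
    mutant = codon[:position] + new_base.upper() + codon[position + 1:]
    if mutant == codon:
        raise ValueError("the new base is the same as the original base")
    before, after = CODON_TABLE[codon], CODON_TABLE[mutant]
    if after == before:
        return "synonymous"   # same amino acid (or stop) is encoded
    if after == "*":
        return "nonsense"     # premature stop codon
    if before == "*":
        return "stop-loss"    # a stop codon now encodes an amino acid
    return "missense"         # one amino acid replaced by another


# GAA encodes glutamate (E); three different single-base changes:
print(classify_point_mutation("GAA", 2, "G"))  # GAG still encodes E
print(classify_point_mutation("GAA", 0, "T"))  # TAA is a stop codon
print(classify_point_mutation("GAA", 1, "T"))  # GTA encodes valine (V)
```

The same codon can fall into any of the three categories depending on which base changes, which is why the sequence alone settles the label but not the biology.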

Computational biologists have developed tools that take into account these types of information, which can be used to draw tentative inferences or make predictions about the likely effect of a specific mutation. Not surprisingly, one application is for understanding possible health effects of genetic variation in humans. For example, are certain variants in some gene likely to affect an individual’s susceptibility to cardiovascular disease?

One such tool is called PolyPhen-2. The website says: “PolyPhen-2 (Polymorphism Phenotyping v2) is a software tool which predicts possible impact of amino acid substitutions on the structure and function of a human proteins using straightforward physical and comparative considerations.” In addition to using structural information described above, it also uses information on whether a given site is highly conserved (little or no variation) or quite variable across humans and related species for which we have information. Why does it use that information? In essence, the program assumes that evolution has optimized a given protein’s activity for whatever it does in humans, related species, and our common ancestors. If a particular site in a protein varies a lot, according to that implicit assumption, the variants probably aren’t harmful because, well, if they were, then those lineages would have died out. If a site is hardly variable at all, by contrast, it’s presumably because mutants at those sites damaged the protein’s important function and led to the demise of those unfortunate lineages.
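To make the conservation logic concrete, here is a toy Python sketch. It is emphatically not PolyPhen-2’s algorithm, which I have not reproduced here; it only illustrates the underlying idea that a site where every species carries the same amino acid is treated as more constrained than a site that varies. The function name `site_conservation` and the four short aligned sequences are made up for the example.

```python
from collections import Counter


def site_conservation(aligned_sequences):
    """Return, for each alignment column, the fraction of sequences that
    carry the most common amino acid at that site (1.0 = fully conserved).

    Toy illustration only; real tools use far richer models.
    """
    length = len(aligned_sequences[0])
    if any(len(seq) != length for seq in aligned_sequences):
        raise ValueError("all aligned sequences must have the same length")
    scores = []
    for column in zip(*aligned_sequences):
        most_common_count = Counter(column).most_common(1)[0][1]
        scores.append(most_common_count / len(column))
    return scores


# Hypothetical four-species alignment of a four-residue stretch.
alignment = ["MKTA", "MKSA", "MRTA", "MQGA"]
for site, score in enumerate(site_conservation(alignment), start=1):
    print(f"site {site}: conservation = {score:.2f}")
```

Under the assumption built into this kind of reasoning, a substitution at the first or last site of this made-up alignment would be flagged as more suspicious than one at the two variable sites in the middle.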

All that makes a lot of good sense … provided the protein of interest is performing the same function, and with the same optimal activities, in everybody and every species used in the analysis. Let’s look now at a specific case that Behe chose to highlight in his book.

II-B. The APOB gene in polar bears

Behe sets the stage for his rule—“break or blunt any functional gene whose loss would increase the number of a species’ offspring”—by summarizing the results of a study by Shiping Liu and coauthors that compared the genomes of polar bears and brown bears. Their paper examined mutations that distinguish these two species. The authors identified a set of mutations that had accumulated along the branch leading to modern polar bears, and in a manner that was consistent with those changes having been beneficial to the polar bears. One of the mutated genes, which was discussed in some detail both by the paper’s authors and by Behe, is called APOB. As Liu et al. wrote (p. 789), the APOB gene encodes ApoB, “the primary lipid-binding protein of chylomicrons and low-density lipoproteins (LDL) … LDL cholesterol is a major risk factor for heart disease and is also known as ‘bad cholesterol.’ ApoB enables the transport of fat molecules in blood plasma and lymph and acts as a ligand for LDL receptors, facilitating the movement of molecules such as cholesterol into cells … The extreme signal of APOB selection implies an important role for this protein in the physiological adaptations of the polar bear.”

As part of their study, Liu et al. analyzed the polar-bear version of the APOB gene using the PolyPhen-2 computational tool described above. Roughly half the mutations in APOB were categorized by that program as “possibly damaging” or “probably damaging,” and the rest were called “benign.” Behe then concluded that some of the mutations had damaged the protein’s function, and that these mutations were beneficial in the environment where the polar bear now lives. In other words, Behe took this output as strong support for his rule.

So what’s the problem? The PolyPhen-2 program, as I explained, is designed to identify mutations that are likely to affect a protein’s structure and therefore its function. It assumes such mutations damage (rather than improve) a protein’s function because structurally similar mutations are rare in humans and other species used for comparison. It does so because it presumes that natural selection has optimized the protein to perform a specific function that is the same in all cases, so that changes must be either benign or damaging to the protein’s function. In fact, the only possible categorical outputs of the program are benign, possibly damaging, and probably damaging. The program simply cannot detect or suggest that a protein might have some improved activity or altered function.

The authors of the paper recognized these limiting assumptions and their implications for the evolution of polar bears. In fact, they specifically interpreted the APOB mutations as follows (p. 789): “… we find nine fixed missense mutations in the polar bear … Five of the nine cluster within the N-terminal βα1 domain of the APOB gene, although the region comprises only 22% of the protein … This domain encodes the surface region and contains the majority of functional domains for lipid transport. We suggest that the shift to a diet consisting predominantly of fatty acids in polar bears induced adaptive changes in APOB, which enabled the species to cope with high fatty acid intake by contributing to the effective clearance of cholesterol from the blood.” In a news piece about this research, one of the paper’s authors, Rasmus Nielsen, said: “The APOB variant in polar bears must be to do with the transport and storage of cholesterol … Perhaps it makes the process more efficient.” In other words, these mutations may not have damaged the protein at all, but quite possibly improved one of its activities, namely the clearance of cholesterol from the blood of a species that subsists on an extremely high-fat diet.
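The clustering noted in that quotation (five of nine mutations in a region comprising 22% of the protein) can be put in rough numerical terms with a back-of-the-envelope calculation. The Python sketch below is my own illustration, not an analysis from the paper. It assumes, purely for the sake of argument, that each of the nine mutations lands independently and uniformly along the protein, so that each has a 22% chance of falling in that domain. Real mutations need not behave that way.

```python
from math import comb


def binomial_tail(n, k, p):
    """Probability of k or more successes in n independent trials,
    each succeeding with probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Naive null model (an assumption for illustration): 9 mutations, each with
# a 0.22 chance of landing in the N-terminal domain.
p_cluster = binomial_tail(n=9, k=5, p=0.22)
print(f"P(at least 5 of 9 in the domain) = {p_cluster:.3f}")
```

Under that simple assumption, the chance of seeing five or more of the nine mutations in the domain is about 3%. That is suggestive of something non-random, but it says nothing about whether the changes impaired or improved the protein, which is the question at issue.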

It appears Behe either overlooked or ignored the authors’ interpretation. Determining whether those authors or Behe are right would require in-depth studies of the biochemical properties of the protein variants, their activities in the polar bear circulatory stream, and their consequences for survival and reproductive success on the bear’s natural diet. That’s a tall order, and we’re unlikely to see such studies because of the technical and logistical challenges. The point is that many proteins, including ApoB, are complex entities that have multiple biochemical activities (ApoB binds multiple lipids), the level and importance of which may depend on both intrinsic (different tissues) and environmental (dietary) contexts. In this example, Behe seems to have been too eager and even determined to describe mutations as damaging a gene, even when the evidence suggests an alternative explanation.

[The picture below shows a polar bear feeding on a seal. It was posted on Wikipedia by AWeith, and it is shown here under the indicated Creative Commons license.]

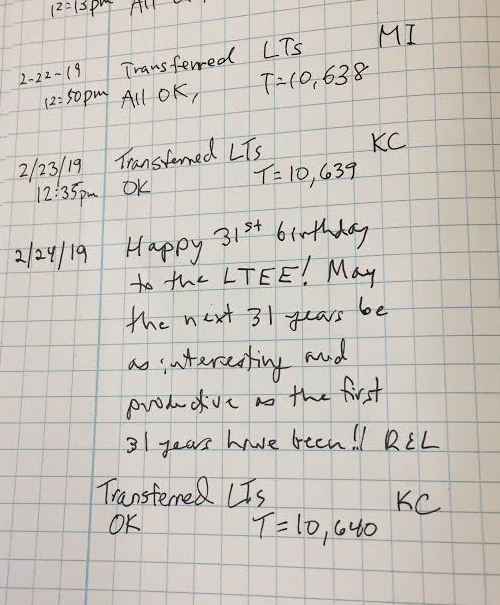
